Anthropic’s new SpaceX compute deal gives Claude more capacity, higher Claude Code limits, and stronger Claude Opus API throughput. Here is what it means for users, developers, Codex, GitHub Copilot, and the OpenAI vs Anthropic model war.

Anthropic has just made one of the most important moves in the AI race. The company has signed a compute deal with SpaceX that gives Claude access to the full compute capacity of SpaceX’s Colossus 1 data center. According to Anthropic, the deal brings more than 300 megawatts of new capacity and more than 220,000 NVIDIA GPUs, coming online within the month. This is not a small cloud upgrade. It is frontier AI infrastructure at a scale that can directly change how many users Claude can serve, how long developers can work inside Claude Code, and how much throughput companies can push through the Claude Opus API.

> We’ve agreed to a partnership with @SpaceX that will substantially increase our compute capacity.
>
> This, along with our other recent compute deals, means that we’ve been able to increase our usage limits for Claude Code and the Claude API.
>
> — Claude (@claudeai), May 6, 2026

The timing is not random. Claude has become one of the most respected AI products for reasoning, writing, research, and coding. But in the developer world, respect is not enough. Developers need tools that stay available during long coding sessions. They do not want to hit a limit while debugging production code, refactoring a large repository, or building a feature with an AI agent. Anthropic’s SpaceX deal is a direct answer to that problem.

What Anthropic actually announced

Anthropic announced three immediate upgrades for Claude users. First, it is doubling Claude Code’s five-hour rate limits for Pro, Max, Team, and seat-based Enterprise plans. Second, it is removing the peak-hours limit reduction for Claude Code on Pro and Max accounts. Third, it is considerably raising API rate limits for Claude Opus models.


This matters because Claude Code is not used like a normal chatbot. A normal chatbot might answer a question and stop. Claude Code is expected to behave more like a coding partner. It reads files, edits code, reasons through bugs, runs long sessions, and helps users move from idea to working software. The more serious the work becomes, the more painful usage limits become.

The SpaceX side also confirmed the partnership. xAI’s official site says SpaceXAI signed an agreement with Anthropic to provide access to Colossus 1, which it describes as one of the world’s largest and fastest-deployed AI supercomputers.

That detail makes the story even bigger. This is not just Anthropic renting anonymous server capacity. It is Claude getting access to one of Elon Musk’s biggest AI infrastructure projects. Reuters reported that the Colossus 1 facility in Memphis contains more than 220,000 NVIDIA processors and gives Anthropic 300 megawatts of new capacity. Reuters also connected the deal to Anthropic’s growing demand for Claude Code and its enterprise AI products.

The deeper meaning: AI is now an infrastructure war

The biggest lesson from this deal is simple: the AI race is no longer only about who has the smartest model. It is about who has enough chips, electricity, cooling, data-center capacity, and deployment infrastructure to keep those models available.

A model can be brilliant, but if users keep hitting limits, the experience breaks. This is especially true for AI coding agents. Coding agents are expensive to run because they do not simply generate one answer. They inspect repositories, maintain context, run tool loops, generate patches, run tests, and revise their own work. Every serious coding session burns compute.

That is why the SpaceX deal is strategic. It gives Anthropic more capacity at the exact moment when OpenAI Codex, GitHub Copilot, and other coding agents are fighting for developer attention. Anthropic is not only trying to make Claude smarter. It is trying to make Claude more available.

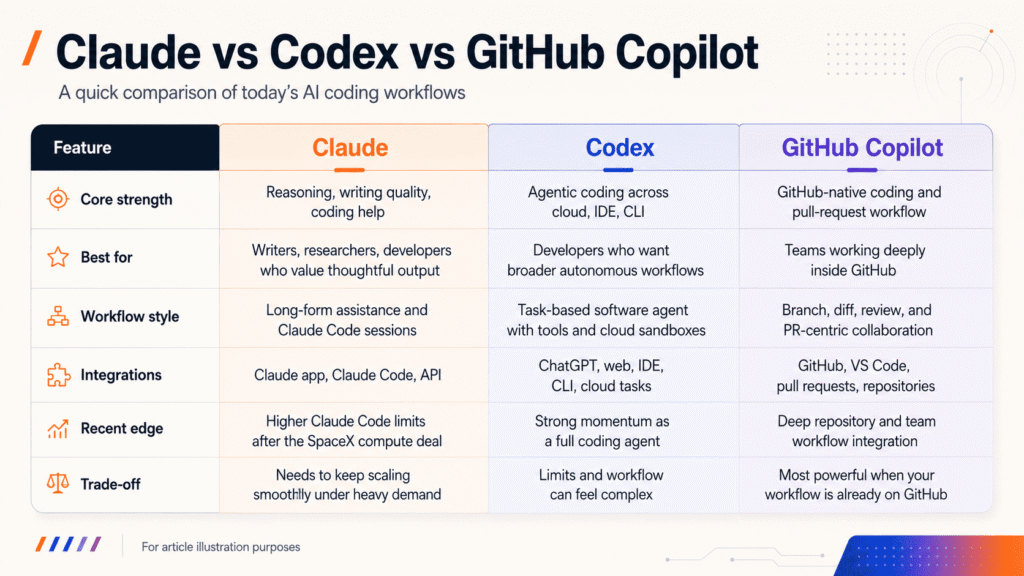

Claude vs Codex vs GitHub Copilot: comparison table

| Feature | Claude after SpaceX deal | OpenAI Codex | GitHub Copilot cloud agent |
|---|---|---|---|
| Main advantage | Strong reasoning, writing, coding judgment, and now higher Claude Code limits | Deep agentic coding workflow across cloud, CLI, IDE, browser, PR review, and apps | Native GitHub workflow with branches, commits, pull requests, and repository tasks |
| Recent usage move | Claude Code five-hour limits doubled for Pro, Max, Team, and seat-based Enterprise plans | Pro offers 5x or 20x higher rate limits than Plus, with temporary double usage on the $100 tier until May 31, 2026 | Usage depends on Copilot plan, premium requests, and GitHub Actions environment |
| Infrastructure angle | SpaceX Colossus 1 capacity with 300+ MW and 220,000+ NVIDIA GPUs | Backed by OpenAI’s own model and product infrastructure | Backed by GitHub and the Microsoft developer ecosystem |
| Best user | Claude loyalists, heavy writers, researchers, and developers who want longer Claude Code sessions | Developers who want an agent that can work across code, apps, PRs, browser, terminal, and cloud tasks | Teams already living inside GitHub issues, branches, PRs, and review workflows |
| Weakness | Still has to prove that higher limits stay reliable as demand keeps rising | More complex limit structure across models, local messages, cloud tasks, and reviews | Most powerful when the codebase and workflow are already centered on GitHub |
| Strategic threat | Keeps Claude users from leaving because of limits | Pulls developers into OpenAI’s agent ecosystem | Turns AI coding into a normal part of GitHub software delivery |

Why this deal is about keeping developers inside Claude

Claude’s biggest risk is not that users dislike it. The risk is that users love it but still leave because another product interrupts them less. That is where Codex becomes dangerous.

OpenAI describes Codex as a cloud-based software engineering agent that can work on many tasks in parallel. It can write features, answer questions about a codebase, fix bugs, and propose pull requests for review, with each task running inside its own cloud sandbox environment.

That is a major shift. Codex is not trying to be a better autocomplete box. It is trying to become the operating layer for software work. OpenAI has also expanded Codex beyond basic coding. Codex can now operate a computer alongside the user, work with more tools and apps, generate images, remember preferences, learn from previous actions, review pull requests, view multiple files and terminals, connect to remote devboxes through SSH, and use an in-app browser.

This is why some developers are shifting more attention to Codex. They are not only comparing Claude and Codex on answer quality. They are comparing workflows. Which agent can stay with the project longer? Which one can review the pull request? Which one can connect to the remote environment? Which one can handle repeated work? Which one fits naturally into the IDE and cloud workflow?

Anthropic’s SpaceX deal is a direct move to close one of Claude’s biggest gaps: availability during heavy use.

The usage-limit battle is now a product weapon

OpenAI is also competing aggressively on usage limits. Its Codex pricing page says Pro users can choose 5x or 20x higher rate limits than Plus, and the $100 per month Pro tier gets double normal Codex usage until May 31, 2026.

This puts pressure on Anthropic. If Codex gives developers more room to work, Claude Code has to respond. That is exactly what Anthropic is doing by doubling five-hour limits for paid Claude Code users and removing peak-hour reductions for Pro and Max users.

This is not just a technical update. It is a retention strategy. Every time a developer hits a wall in Claude Code, they have a reason to open Codex. Every time Codex successfully completes a task, reviews code, or works inside a cloud environment, the developer becomes more comfortable with OpenAI’s ecosystem. Anthropic needs Claude to feel less restricted, more dependable, and more useful for long sessions.

GitHub is making the fight even harder

GitHub is also changing the battlefield. GitHub says the Copilot cloud agent can research a repository, create an implementation plan, make code changes on a branch, let developers review the diff, iterate, and create a pull request when ready. It can also automate branch creation, commit messages, and pushing changes.

This is where the future becomes clear. AI coding is moving from “ask a chatbot for help” to “delegate work to an agent.” The agent does not just suggest code. It creates branches, works on tasks, prepares diffs, and moves software closer to shipping.

That is why Claude needed this SpaceX deal. Claude Code cannot compete only by being smart. It has to compete inside the actual rhythm of software development. Developers want fewer breaks, longer sessions, better context, stronger integrations, and enough compute to keep the agent alive until the job is done.

Why SpaceX wins too

The deal also benefits SpaceX and Musk’s AI infrastructure strategy. Reuters described it as a partnership that supports SpaceX’s AI ambitions and gives it a major outside AI customer. Reuters also reported that SpaceX and Anthropic are exploring the idea of space-based data centers, which turns this deal into more than a short-term compute rental.

That is a powerful business story. Anthropic gets compute. SpaceX gets a serious AI customer. Claude users get higher limits. Developers get longer coding sessions. And the wider AI industry gets a signal that compute capacity is becoming a product in itself.

It also creates an unusual situation in which one AI rival depends on another’s infrastructure. Claude competes with Musk’s Grok in the broader AI assistant market, yet Anthropic is still using SpaceXAI’s compute. That shows how intense the compute race has become. In AI, even competitors may become customers if the infrastructure is strong enough.

What users will actually feel

For Claude Pro and Max users, the biggest improvement should be reliability. Removing peak-hour reductions means the experience should feel more predictable during busy times. For Claude Code users, doubled five-hour limits should make it easier to complete real development sessions without being cut off midway.

For API customers, higher Opus rate limits are even more important. Companies building AI products need throughput. They need to handle more requests, larger workloads, more users, and more agent calls. If Claude Opus becomes easier to scale, more companies can build serious products on top of it.

For Anthropic, the most important outcome is loyalty. Claude already has a strong fan base. But in AI, loyalty can disappear quickly if another tool removes friction. The SpaceX deal helps Anthropic protect Claude users from the biggest reason they might switch: limits.

Final verdict

Anthropic’s SpaceX deal is a major moment in the AI coding war. It gives Claude access to the massive compute of Colossus 1, raises Claude Code limits, removes peak-hour reductions for Pro and Max users, and increases Claude Opus API throughput. That directly improves user experience, developer productivity, and enterprise scalability.

But the deeper story is more aggressive. OpenAI Codex is becoming a full agentic work platform. GitHub Copilot is turning AI into a branch-and-pull-request worker. Developers are moving toward tools that do not just answer questions, but actually help ship software. Anthropic knows this, and the SpaceX deal is its strongest answer yet.

Claude just became harder to leave. Codex is still pushing hard. GitHub is turning agents into normal developer infrastructure. The winner will not simply be the company with the best model benchmark. The winner will be the company that gives users the best full workflow: smarter models, more compute, higher limits, stronger integrations, and fewer interruptions.

Put simply, Claude’s SpaceX deal is not just about more GPUs. It is about survival, retention, and power in the next phase of AI. The AI coding war has moved from model demos to real developer productivity, and Anthropic has just bought itself a much stronger seat at the table.